Latent space

In contrast to pixel space, where users engage with AI images perceptually, the latent space is an abstract space internal to a generative algorithm such as Stable Diffusion. It can be represented as a transitional space between the collection of images in a dataset and the generation of new images. In the latent space, the dataset is translated into statistical representations that can be reconstructed back into images. As explained by Joanna Zylinska:

In Stable Diffusion, it was the encoding and decoding of images in so-called ‘latent space’, i.e., a simplified mathematical space where images can be reduced in size (or rather represented through smaller amounts of data) to facilitate multiple operations at speed, that drove the model’s success.[1]

It is useful to think about that space as a map. As Estelle Blaschke, Max Bonhomme, Christian Joschke and Antonio Somaini explain:

A latent space consists of vectors (series of numbers, arranged in a precise order) that represent data points in a multidimensional space with hundreds or even thousands of dimensions. Each vector, with n number of dimensions, represents a specific data point, with n number of coordinates. These coordinates capture some of the characteristics of the digital object encoded and represented in the latent space, determining its position relative to other digital objects: for example, the position of a word in relation to other words in a given language, or the relationship of an image to other images or to texts.[2]
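The idea of position "relative to other digital objects" can be made concrete with a toy example. The sketch below is only an illustration: the three-dimensional vectors and their values are invented, whereas a real latent space has hundreds or thousands of dimensions learned during training. The names `latent`, `distance` and `nearest` are hypothetical.

```python
import math

# Toy 3-dimensional "latent" vectors. The values are invented for
# illustration; they are not taken from any real model.
latent = {
    "tree": [0.9, 0.1, 0.3],
    "bush": [0.8, 0.2, 0.35],
    "lamp post": [0.1, 0.9, 0.4],
}


def distance(a, b):
    """Euclidean distance between two vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest(name):
    """Return the other item whose vector lies closest to `name`."""
    others = (key for key in latent if key != name)
    return min(others, key=lambda key: distance(latent[name], latent[key]))


print(nearest("tree"))
```

In this toy map, the closest neighbour of "tree" is "bush" rather than "lamp post", simply because their coordinates are more alike. Proximity in the space stands in for similarity between the encoded objects.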

The relation between datasets and latent space is a complex one. A latent space is a translation of a given training set. Therefore, in the process of model training, datasets are central, and factors such as the curation method or scale have a great impact on the regularities that can be learned and represented as vectors. But a latent space is not a dataset's literal copy. It is a statistical interpretation. A latent space gives a model its own identity. As WetCircuit (AKA Cutscene Artist), a prominent user of the Draw Things app and author of tutorials for it, puts it, a model is not "bottomless".[3] This is due to the fact that the model's latent space is finite and therefore biased. This is an important argument for the defense of a decentralized AI ecosystem that ensures a diverse range of worldviews.

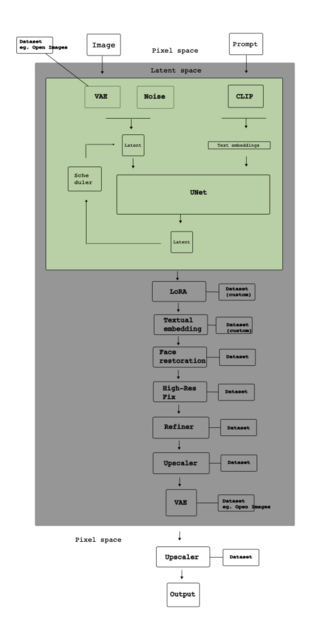

The multiplication of models (and therefore of their latent spaces) produces various kinds of relations between them. In current generation pipelines, images are rarely the pure product of one latent space. In fact, different components intervene in image generation. Small models such as LoRAs add capabilities to the model. The software CLIP is used to encode user input. Other components such as upscalers add higher resolution to the results. Therefore, there are many latent spaces involved, each with its own inflections, tendencies and limitations. Another important form of relation occurs when one model is trained on top of an existing one. For instance, as Stable Diffusion is open source, many coders have used it as a basis for further development. On CivitAI, a model page has a field called Base Model which indicates the model's "origin". As models are retrained, their inner latent space is modified, amplified or condensed. Their internal map is partially redrawn. But the resulting model retains many of the base model's features. The tensions between what a new model adds to the latent space and what it inherits are explored further in the LoRA entry.

The abstract nature of the latent space makes it difficult to grasp. The introduction of prompting techniques (text-to-image) has made it possible to query and explore that space using natural language. And the ability to use images as input to generate other images (image-to-image) has opened a whole field of possibilities for queries that may be difficult to formulate in words. This multi-modal quality of existing models is in part possible because other components such as the variational autoencoder and CLIP can transform various media such as texts and images into vectors. In pixel space, images and texts belong to different perceptual registers and relate to different modes of experiencing the world. Once encoded as latent representations, they are both treated as vectors and participate smoothly in the same space.

For a model to work, it needs to be able to understand the semantic relationship between text and image: say, how a tree is different from a lamp post, or how a photo is different from an 18th-century naturalist painting. The software CLIP (by OpenAI) is widely used for this, including by LAION. CLIP is capable of predicting which images can be paired with which text in a dataset.

Once trained, CLIP can compute representations of images and text, called embeddings, and then record how similar they are. The model can thus be used for a range of tasks such as image classification or retrieving similar images or text.[4]
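To give a sense of what "recording how similar they are" can mean in practice, the sketch below compares one image embedding with two caption embeddings using cosine similarity, a common measure for this kind of comparison. It does not call CLIP itself: the embeddings are short, invented vectors, and the function names `cosine_similarity` and `best_caption` are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_embedding, caption_embeddings):
    """Score every caption against the image and return the best match."""
    scores = {
        caption: cosine_similarity(image_embedding, embedding)
        for caption, embedding in caption_embeddings.items()
    }
    return max(scores, key=scores.get), scores


# Invented stand-ins for embeddings; a real encoder would produce much
# longer vectors from actual images and texts.
image_embedding = [0.7, 0.2, 0.1]
caption_embeddings = {
    "a photo of a tree": [0.8, 0.1, 0.1],
    "a photo of a lamp post": [0.1, 0.9, 0.2],
}

label, scores = best_caption(image_embedding, caption_embeddings)
print(label)
```

Choosing the caption with the highest score is, in miniature, how an embedding model can be used for image classification: the candidate labels are written as texts, and the image is assigned to the text it sits closest to.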

The annotation of images is central here. That is, for the dataset to be useful, there need to be descriptions of what is in the images, what style they are in, their aesthetic qualities, and so on. Whereas ImageNet, for instance, crowdsources the annotation process, LAION uses Common Crawl to find HTML with <img> tags, and then uses the Alt Text to annotate the images (Alt Text is a descriptive text that acts as a substitute for visual items on a page, and is sometimes included in the image data to increase accessibility). This is a highly cost-effective solution, which has enabled its community to produce and make publicly available a range of datasets and models that can be used in generative AI image creation.
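The principle of harvesting image and Alt Text pairs from web pages can be sketched with Python's standard HTML parser. This is a simplified illustration, not LAION's actual pipeline, which operates at a very different scale and applies further filtering. The class name `AltTextCollector`, the function `extract_pairs` and the sample markup are hypothetical.

```python
from html.parser import HTMLParser


class AltTextCollector(HTMLParser):
    """Collect (image source, Alt Text) pairs from <img> tags."""

    def __init__(self):
        super().__init__()
        self.pairs = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src")
        alt = attributes.get("alt")
        # Images with a missing or empty Alt Text carry no annotation
        # and are skipped.
        if src and alt and alt.strip():
            self.pairs.append((src, alt.strip()))


def extract_pairs(html):
    """Return all usable (src, alt) pairs found in an HTML string."""
    collector = AltTextCollector()
    collector.feed(html)
    return collector.pairs


# Invented sample page with one described image and two undescribed ones.
sample = (
    '<p><img src="oak.jpg" alt="An old oak tree in winter">'
    '<img src="spacer.gif" alt="">'
    '<img src="banner.png"></p>'
)
print(extract_pairs(sample))
```

Only the first image in the sample yields a pair. The example makes visible a consequence of this method: what enters the dataset depends on what web authors chose to describe, and how.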

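As also explained in maps, and very briefly put, diffusion models function by encoding images into latent representations (using a Variational Autoencoder, VAE), progressively adding noise to these representations, and then learning how to reverse the process so that noise can be decoded back into images.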
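The noising step can be sketched in a few lines. The example below mixes a latent vector with Gaussian noise using the square-root weighting commonly found in diffusion models; it is a minimal sketch under stated assumptions, not the code of Stable Diffusion. The latent values are invented, and the names `add_noise` and `keep` (the share of the original signal that is retained) are hypothetical.

```python
import math
import random


def add_noise(latent, keep, rng):
    """Blend a latent vector with Gaussian noise.

    `keep` lies between 0 and 1: at 1.0 the latent is untouched,
    at 0.0 only noise remains.
    """
    noise = [rng.gauss(0.0, 1.0) for _ in latent]
    noisy = [
        math.sqrt(keep) * value + math.sqrt(1.0 - keep) * sample
        for value, sample in zip(latent, noise)
    ]
    return noisy, noise


rng = random.Random(0)           # fixed seed so the run is repeatable
latent = [1.0, -2.0, 0.5]        # invented stand-in for an encoded image

for keep in (1.0, 0.5, 0.0):
    noisy, _ = add_noise(latent, keep, rng)
    print(keep, noisy)
```

During training, a model is shown such noisy versions and asked to estimate the noise that was added. Generation then runs in the other direction, starting from noise and removing it step by step before the VAE decodes the result into pixels.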

For a more complete discussion of latent space, see also diffusion and maps.

[1] Joanna Zylinska, “Diffused Seeing: The Epistemological Challenge of Generative AI,” Media Theory 8, no. 1 (2024): 229–258, https://doi.org/10.70064/mt.v8i1.1075.

[2] Estelle Blaschke, Max Bonhomme, Christian Joschke, and Antonio Somaini, “Introduction. Photographs and Algorithms,” Transbordeur 9 (January 2025), https://doi.org/10.4000/13dwo.

[3] HOW DO YOU QUOTE A DISCORD MESSAGE?

[4] Maximilian Schreiner, “New CLIP Model Aims to Make Stable Diffusion Even Better,” The Decoder, September 18, 2022, https://the-decoder.com/new-clip-model-aims-to-make-stable-diffusion-even-better/.